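1. Introduction

Although YOLOv5 is a fast algorithm, it is hard to reach real-time detection when it is deployed as-is on a Jetson AGX Xavier, so it has to be accelerated with TensorRT on the board. This post records how I accelerated and deployed YOLOv5 on a Jetson AGX Xavier. My system is Ubuntu 18.04 with Python 3.6.9 and CUDA 10.2.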

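2. Environment Setup

I will not repeat how to set up the Python environment for YOLOv5 here. I mainly followed the two articles below. Following them should rarely cause problems, and if you do run into one, you can reach me in the comments.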

https://blog.csdn.net/qq_40691868/article/details/114379061?spm=1001.2014.3001.5501

https://blog.csdn.net/qq_40691868/article/details/114362278?spm=1001.2014.3001.5501

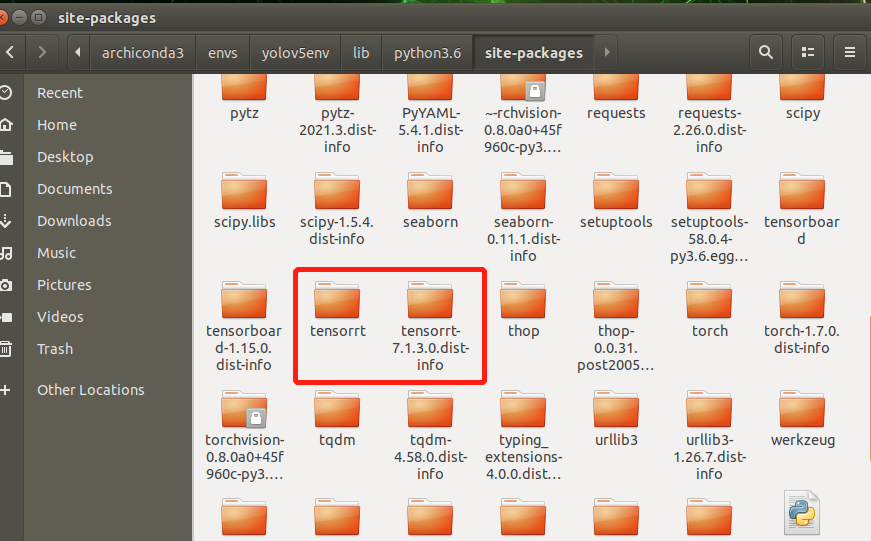

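Once that is done, make the TensorRT library importable. To do so, copy the tensorrt-related folders under /usr/lib/python3.6/dist-packages/ into the site-packages folder of the Python virtual environment you created. In my case that folder is /home/nvidia/archiconda3/envs/yolov5env/lib/python3.6/site-packages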
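The copy step can also be scripted. The following is a minimal sketch, assuming that every entry to copy has a name starting with `tensorrt`; the helper name `copy_tensorrt_packages` is my own and is not part of any library.

```python
import shutil
from pathlib import Path


def copy_tensorrt_packages(src_dir, dst_dir):
    """Copy every entry whose name starts with 'tensorrt' from src_dir into dst_dir.

    Returns the sorted names of the copied entries.
    Assumption: the TensorRT bindings are the entries named 'tensorrt*'.
    """
    src, dst = Path(src_dir), Path(dst_dir)
    copied = []
    for entry in sorted(src.iterdir()):
        if not entry.name.startswith("tensorrt"):
            continue
        target = dst / entry.name
        if entry.is_dir():
            # Replace a stale copy so that re-running the helper is safe.
            if target.exists():
                shutil.rmtree(str(target))
            shutil.copytree(str(entry), str(target))
        else:
            shutil.copy2(str(entry), str(target))
        copied.append(entry.name)
    return copied
```

On the board it would be called with the two paths from the paragraph above, for example `copy_tensorrt_packages("/usr/lib/python3.6/dist-packages", "/home/nvidia/archiconda3/envs/yolov5env/lib/python3.6/site-packages")`.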

Install pycuda

conda activate yolov5env

pip install pycuda
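Before moving on, it may be worth checking that the environment can actually see the packages the later script imports. This is a small sketch using only the standard library; `missing_modules` is a hypothetical helper name, and the module list simply mirrors the imports of the test script further down.

```python
import importlib.util


def missing_modules(names):
    """Return the names from `names` that cannot be found in the current environment."""
    return [name for name in names if importlib.util.find_spec(name) is None]


# Inside yolov5env on the board, the printed list should be empty.
print(missing_modules(["tensorrt", "pycuda", "cv2", "torch", "torchvision"]))
```

`find_spec` only locates the top-level packages, so this does not prove that the CUDA libraries load correctly; it only catches a missing copy or a missing install.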

3. Acceleration Steps

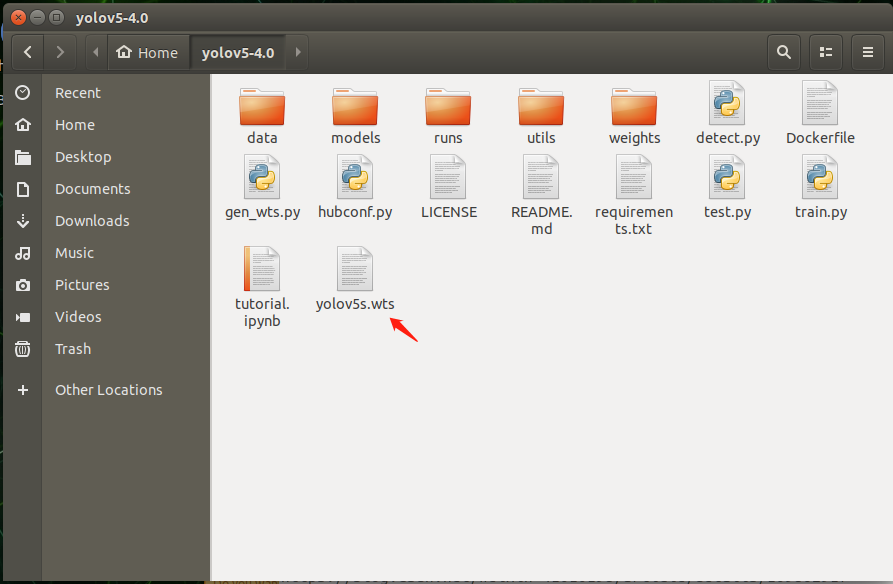

The model I use is YOLOv5s from the v4.0 release.

Clone the projects

git clone -b v4.0 https://github.com/ultralytics/yolov5.git

git clone -b yolov5-v4.0 https://github.com/wang-xinyu/tensorrtx.git

Generate the engine file

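- Copy yolov5s.pt into the weights folder of the yolov5 project.

- Copy gen_wts.py from the yolov5 folder of the tensorrtx project into the yolov5 project.

- Generate the yolov5s.wts file by running the following commands in the yolov5 project:

conda activate yolov5env

python gen_wts.py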

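Next, go into the yolov5 folder of the tensorrtx project and run:

mkdir build

Copy yolov5s.wts from the yolov5 project into this build folder, open a terminal in the build folder, and run the following commands one after another:

cmake ..

make

sudo ./yolov5 -s yolov5s.wts yolov5s.engine s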

When the last command finishes, the yolov5s.engine file has been generated.
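For readers wondering what the intermediate .wts file contains: as far as I understand the gen_wts.py script, it is a plain-text dump with the tensor count on the first line, followed by one line per tensor holding its name, its element count, and each value as the hex form of a big-endian float32. The sketch below writes and reads that assumed layout so a file can be inspected; `write_wts` and `read_wts` are illustrative names, and the layout should be checked against the gen_wts.py in your own checkout.

```python
import struct


def write_wts(tensors, path):
    """Write {name: list of floats} in the assumed .wts text layout."""
    with open(path, "w") as f:
        f.write("{}\n".format(len(tensors)))
        for name, values in tensors.items():
            f.write("{} {}".format(name, len(values)))
            for v in values:
                # Each value is stored as the hex form of a big-endian float32.
                f.write(" " + struct.pack(">f", float(v)).hex())
            f.write("\n")


def read_wts(path):
    """Parse a file in the assumed .wts layout back into {name: list of floats}."""
    tensors = {}
    with open(path) as f:
        count = int(f.readline())
        for _ in range(count):
            fields = f.readline().split()
            name, length = fields[0], int(fields[1])
            values = [struct.unpack(">f", bytes.fromhex(h))[0] for h in fields[2:]]
            if len(values) != length:
                raise ValueError(
                    "tensor {} declares {} values, found {}".format(name, length, len(values))
                )
            tensors[name] = values
    return tensors
```

Calling `read_wts("yolov5s.wts")` and printing the keys is a quick way to confirm that the export covered every layer before spending time on the engine build.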

Accelerated detection on images and video

Accelerated image detection

Open a terminal in the build folder and run:

sudo ./yolov5 -d yolov5s.engine ../samples

This runs detection on the sample images and reports the results.

As the reported timings show, detection is quite fast.
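To put a number on "quite fast" for the Python wrapper used later in this post, one option is to time repeated calls. This is only a sketch: `measure_fps` and its parameters are names I made up, and the figure it returns includes preprocessing and postprocessing, not just the TensorRT execution.

```python
import time


def measure_fps(step, iterations=100, warmup=10):
    """Call `step()` repeatedly and return the average number of calls per second."""
    for _ in range(warmup):
        step()  # warm-up calls are excluded from the timing
    start = time.perf_counter()
    for _ in range(iterations):
        step()
    elapsed = time.perf_counter() - start
    if elapsed == 0:
        return float("inf")
    return iterations / elapsed
```

With the wrapper object and a captured frame from the script below, a call could look like `measure_fps(lambda: yolov5_wrapper.infer(frame))`.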

Real-time detection with a network camera

Create yolo_trt_test.py in the yolov5 folder of the tensorrtx project with the following content:

"""

An example that uses TensorRT's Python api to make inferences.

"""

import ctypes
import os
import random
import sys
import threading
import time

import cv2
import numpy as np
import pycuda.autoinit
import pycuda.driver as cuda
import tensorrt as trt
import torch
import torchvision

INPUT_W = 640  # INPUT_W and INPUT_H must match the img-size used by detect.py in YOLOv5
INPUT_H = 640  # before acceleration, otherwise errors will occur
CONF_THRESH = 0.15
IOU_THRESHOLD = 0.45
int_box = [0, 0, 0, 0]
int_box1 = [0, 0, 0, 0]
fps1 = 0.0

def plot_one_box(x, img, color=None, label=None, line_thickness=None):
    """
    description: Plots one bounding box on image img,
                 this function comes from the YOLOv5 project.
    param:
        x: a box like [x1, y1, x2, y2]
        img: an OpenCV image object
        color: color to draw the rectangle, such as (0, 255, 0)
        label: str
        line_thickness: int
    return:
        no return
    """
    tl = (
        line_thickness or round(0.002 * (img.shape[0] + img.shape[1]) / 2) + 1
    )  # line/font thickness
    color = color or [random.randint(0, 255) for _ in range(3)]
    c1, c2 = (int(x[0]), int(x[1])), (int(x[2]), int(x[3]))
    cv2.rectangle(img, c1, c2, color, thickness=tl, lineType=cv2.LINE_AA)
    if label:
        tf = max(tl - 1, 1)  # font thickness
        t_size = cv2.getTextSize(label, 0, fontScale=tl / 3, thickness=tf)[0]
        c2 = c1[0] + t_size[0], c1[1] + t_size[1] + 8
        cv2.rectangle(img, c1, c2, color, -1, cv2.LINE_AA)  # filled
        cv2.putText(
            img,
            label,
            (c1[0], c1[1] + t_size[1] + 5),
            0,
            tl / 3,
            [255, 255, 255],
            thickness=tf,
            lineType=cv2.LINE_AA,
        )

class YoLov5TRT(object):
    """
    description: A YOLOv5 class that wraps TensorRT ops, preprocess and postprocess ops.
    """

    def __init__(self, engine_file_path):
        # Create a context on this device
        self.cfx = cuda.Device(0).make_context()
        stream = cuda.Stream()
        TRT_LOGGER = trt.Logger(trt.Logger.INFO)
        runtime = trt.Runtime(TRT_LOGGER)

        # Deserialize the engine from file
        with open(engine_file_path, "rb") as f:
            engine = runtime.deserialize_cuda_engine(f.read())
        context = engine.create_execution_context()

        host_inputs = []
        cuda_inputs = []
        host_outputs = []
        cuda_outputs = []
        bindings = []

        for binding in engine:
            size = trt.volume(engine.get_binding_shape(binding)) * engine.max_batch_size
            dtype = trt.nptype(engine.get_binding_dtype(binding))
            # Allocate host and device buffers
            host_mem = cuda.pagelocked_empty(size, dtype)
            cuda_mem = cuda.mem_alloc(host_mem.nbytes)
            # Append the device buffer to device bindings.
            bindings.append(int(cuda_mem))
            # Append to the appropriate list.
            if engine.binding_is_input(binding):
                host_inputs.append(host_mem)
                cuda_inputs.append(cuda_mem)
            else:
                host_outputs.append(host_mem)
                cuda_outputs.append(cuda_mem)

        # Store
        self.stream = stream
        self.context = context
        self.engine = engine
        self.host_inputs = host_inputs
        self.cuda_inputs = cuda_inputs
        self.host_outputs = host_outputs
        self.cuda_outputs = cuda_outputs
        self.bindings = bindings

    def infer(self, input_image_path):
        global int_box, int_box1, fps1
        # threading.Thread.__init__(self)
        # Make self the active context, pushing it on top of the context stack.
        self.cfx.push()
        # Restore
        stream = self.stream
        context = self.context
        engine = self.engine
        host_inputs = self.host_inputs
        cuda_inputs = self.cuda_inputs
        host_outputs = self.host_outputs
        cuda_outputs = self.cuda_outputs
        bindings = self.bindings
        # Do image preprocess
        input_image, image_raw, origin_h, origin_w = self.preprocess_image(
            input_image_path
        )
        # Copy input image to host buffer
        np.copyto(host_inputs[0], input_image.ravel())
        # Transfer input data to the GPU.
        cuda.memcpy_htod_async(cuda_inputs[0], host_inputs[0], stream)
        # Run inference.
        context.execute_async(bindings=bindings, stream_handle=stream.handle)
        # Transfer predictions back from the GPU.
        cuda.memcpy_dtoh_async(host_outputs[0], cuda_outputs[0], stream)
        # Synchronize the stream
        stream.synchronize()
        # Remove any context from the top of the context stack, deactivating it.
        self.cfx.pop()
        # Here we use the first row of output because batch_size = 1
        output = host_outputs[0]
        # Do postprocess
        result_boxes, result_scores, result_classid = self.post_process(
            output, origin_h, origin_w
        )
        # Draw rectangles and labels on the original image
        for i in range(len(result_boxes)):
            box1 = result_boxes[i]
            # I added an if check here so that only the classes I need are drawn.
            # `categories` is the module-level list defined in the main block.
            if categories[int(result_classid[i])] in ["person", "car", "bus", "truck"]:
                plot_one_box(
                    box1,
                    image_raw,
                    label="{}:{:.2f}".format(
                        categories[int(result_classid[i])], result_scores[i]
                    ),
                )
        return image_raw
        # parent, filename = os.path.split(input_image_path)
        # save_name = os.path.join(parent, "output_" + filename)
        # # Save image
        # cv2.imwrite(save_name, image_raw)

    def destroy(self):
        # Remove any context from the top of the context stack, deactivating it.
        self.cfx.pop()

    def preprocess_image(self, input_image_path):
        """
        description: Take a BGR image, convert it to RGB,
                     resize and pad it to target size, normalize to [0,1],
                     transform to NCHW format.
        param:
            input_image_path: the BGR image as a numpy array (despite the name, not a file path)
        return:
            image: the processed image
            image_raw: the original image
            h: original height
            w: original width
        """
        image_raw = input_image_path
        h, w, c = image_raw.shape
        image = cv2.cvtColor(image_raw, cv2.COLOR_BGR2RGB)
        # Calculate width, height and paddings
        r_w = INPUT_W / w
        r_h = INPUT_H / h
        if r_h > r_w:
            tw = INPUT_W
            th = int(r_w * h)
            tx1 = tx2 = 0
            ty1 = int((INPUT_H - th) / 2)
            ty2 = INPUT_H - th - ty1
        else:
            tw = int(r_h * w)
            th = INPUT_H
            tx1 = int((INPUT_W - tw) / 2)
            tx2 = INPUT_W - tw - tx1
            ty1 = ty2 = 0
        # Resize the image along its long side while maintaining the aspect ratio
        image = cv2.resize(image, (tw, th))
        # Pad the short side with (128,128,128)
        image = cv2.copyMakeBorder(
            image, ty1, ty2, tx1, tx2, cv2.BORDER_CONSTANT, (128, 128, 128)
        )
        image = image.astype(np.float32)
        # Normalize to [0,1]
        image /= 255.0
        # HWC to CHW format:
        image = np.transpose(image, [2, 0, 1])
        # CHW to NCHW format
        image = np.expand_dims(image, axis=0)
        # Convert the image to row-major order, also known as "C order":
        image = np.ascontiguousarray(image)
        return image, image_raw, h, w

    def xywh2xyxy(self, origin_h, origin_w, x):
        """
        description: Convert nx4 boxes from [x, y, w, h] to [x1, y1, x2, y2] where xy1=top-left, xy2=bottom-right
        param:
            origin_h: height of original image
            origin_w: width of original image
            x: A boxes tensor, each row is a box [center_x, center_y, w, h]
        return:
            y: A boxes tensor, each row is a box [x1, y1, x2, y2]
        """
        y = torch.zeros_like(x) if isinstance(x, torch.Tensor) else np.zeros_like(x)
        r_w = INPUT_W / origin_w
        r_h = INPUT_H / origin_h
        if r_h > r_w:
            y[:, 0] = x[:, 0] - x[:, 2] / 2
            y[:, 2] = x[:, 0] + x[:, 2] / 2
            y[:, 1] = x[:, 1] - x[:, 3] / 2 - (INPUT_H - r_w * origin_h) / 2
            y[:, 3] = x[:, 1] + x[:, 3] / 2 - (INPUT_H - r_w * origin_h) / 2
            y /= r_w
        else:
            y[:, 0] = x[:, 0] - x[:, 2] / 2 - (INPUT_W - r_h * origin_w) / 2
            y[:, 2] = x[:, 0] + x[:, 2] / 2 - (INPUT_W - r_h * origin_w) / 2
            y[:, 1] = x[:, 1] - x[:, 3] / 2
            y[:, 3] = x[:, 1] + x[:, 3] / 2
            y /= r_h
        return y

    def post_process(self, output, origin_h, origin_w):
        """
        description: postprocess the prediction
        param:
            output: A flat array like [num_boxes, cx,cy,w,h,conf,cls_id, cx,cy,w,h,conf,cls_id, ...]
            origin_h: height of original image
            origin_w: width of original image
        return:
            result_boxes: final boxes, a boxes tensor, each row is a box [x1, y1, x2, y2]
            result_scores: final scores, a tensor, each element is the score corresponding to a box
            result_classid: final class ids, a tensor, each element is the class id corresponding to a box
        """
        # Get the number of boxes detected
        num = int(output[0])
        # Reshape to a two-dimensional ndarray
        pred = np.reshape(output[1:], (-1, 6))[:num, :]
        # to a torch Tensor
        pred = torch.Tensor(pred).cuda()
        # Get the boxes
        boxes = pred[:, :4]
        # Get the scores
        scores = pred[:, 4]
        # Get the classid
        classid = pred[:, 5]
        # Choose those boxes whose score > CONF_THRESH
        si = scores > CONF_THRESH
        boxes = boxes[si, :]
        scores = scores[si]
        classid = classid[si]
        # Transform bbox from [center_x, center_y, w, h] to [x1, y1, x2, y2]
        boxes = self.xywh2xyxy(origin_h, origin_w, boxes)
        # Do nms
        indices = torchvision.ops.nms(boxes, scores, iou_threshold=IOU_THRESHOLD).cpu()
        result_boxes = boxes[indices, :].cpu()
        result_scores = scores[indices].cpu()
        result_classid = classid[indices].cpu()
        return result_boxes, result_scores, result_classid

class myThread(threading.Thread):
    def __init__(self, func, args):
        threading.Thread.__init__(self)
        self.func = func
        self.args = args

    def run(self):
        self.func(*self.args)

if __name__ == "__main__":
    # load custom plugins (an absolute path is recommended)
    PLUGIN_LIBRARY = "/home/nvidia/Desktop/tensorrtx-yolov5-v4.0/yolov5/build/libmyplugins.so"
    ctypes.CDLL(PLUGIN_LIBRARY)
    engine_file_path = "/home/nvidia/Desktop/tensorrtx-yolov5-v4.0/yolov5/build/yolov5s.engine"

    # load coco labels
    categories = ["person", "bicycle", "car", "motorcycle", "airplane", "bus", "train", "truck", "boat",
                  "traffic light",
                  "fire hydrant", "stop sign", "parking meter", "bench", "bird", "cat", "dog", "horse", "sheep", "cow",
                  "elephant", "bear", "zebra", "giraffe", "backpack", "umbrella", "handbag", "tie", "suitcase",
                  "frisbee",
                  "skis", "snowboard", "sports ball", "kite", "baseball bat", "baseball glove", "skateboard",
                  "surfboard",
                  "tennis racket", "bottle", "wine glass", "cup", "fork", "knife", "spoon", "bowl", "banana", "apple",
                  "sandwich", "orange", "broccoli", "carrot", "hot dog", "pizza", "donut", "cake", "chair", "couch",
                  "potted plant", "bed", "dining table", "toilet", "tv", "laptop", "mouse", "remote", "keyboard",
                  "cell phone",
                  "microwave", "oven", "toaster", "sink", "refrigerator", "book", "clock", "vase", "scissors",
                  "teddy bear",
                  "hair drier", "toothbrush"]

    # a YoLov5TRT instance
    yolov5_wrapper = YoLov5TRT(engine_file_path)

    url = 'put the address of your own network camera here'
    cap = cv2.VideoCapture(url)
    while True:
        ret, image = cap.read()
        # The stream sometimes drops while the program is reading it, so when no
        # frame is returned, release the capture, reopen the camera and try again.
        if not ret:
            cap.release()
            cap = cv2.VideoCapture(url)
            continue
        img = yolov5_wrapper.infer(image)
        cv2.imshow("result", img)
        if cv2.waitKey(1) & 0xFF == ord('q'):  # wait 1 millisecond for a key press
            break

    cap.release()
    cv2.destroyAllWindows()
    yolov5_wrapper.destroy()

Then run the following commands:

conda activate yolov5env

python yolo_trt_test.py
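The least obvious part of the script is how boxes predicted on the padded 640x640 network input are mapped back to the original frame. The following dependency-free sketch restates that arithmetic for a single box. The function names are mine, and it uses the integer padding from `preprocess_image`, whereas `xywh2xyxy` in the script uses the unrounded padding, so the two can differ by a fraction of a pixel.

```python
def letterbox_geometry(origin_w, origin_h, input_w=640, input_h=640):
    """Return (scale, pad_left, pad_top) for an aspect-preserving resize with centred padding."""
    r_w = input_w / origin_w
    r_h = input_h / origin_h
    if r_h > r_w:
        # Width is the limiting side: the image fills the width, padding goes top and bottom.
        scale = r_w
        pad_left = 0
        pad_top = int((input_h - int(r_w * origin_h)) / 2)
    else:
        # Height is the limiting side: the image fills the height, padding goes left and right.
        scale = r_h
        pad_left = int((input_w - int(r_h * origin_w)) / 2)
        pad_top = 0
    return scale, pad_left, pad_top


def to_original_xyxy(box_cxcywh, origin_w, origin_h, input_w=640, input_h=640):
    """Map one [cx, cy, w, h] box in network coordinates to (x1, y1, x2, y2) in the original image."""
    cx, cy, w, h = box_cxcywh
    scale, pad_left, pad_top = letterbox_geometry(origin_w, origin_h, input_w, input_h)
    x1 = (cx - w / 2 - pad_left) / scale
    y1 = (cy - h / 2 - pad_top) / scale
    x2 = (cx + w / 2 - pad_left) / scale
    y2 = (cy + h / 2 - pad_top) / scale
    return x1, y1, x2, y2


# A 1280x720 frame is scaled by 0.5 to 640x360 and padded by 140 rows on top.
print(letterbox_geometry(1280, 720))
# A box at the centre of the network input lands at the centre of the frame.
print(to_original_xyxy((320, 320, 100, 50), 1280, 720))
```

Working through one frame size by hand like this is a quick way to check that boxes line up after changing INPUT_W or INPUT_H.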

4. Summary

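The accelerated YOLOv5 is indeed fast and is fully capable of real-time detection on the Jetson AGX Xavier. I hit a few pitfalls during deployment that I have not listed in detail here. They are not hard to resolve, and a Google search usually turns up the answer. Many thanks to the author of the article I followed: much of what I wrote is similar to that article, with some changes to suit my own needs.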